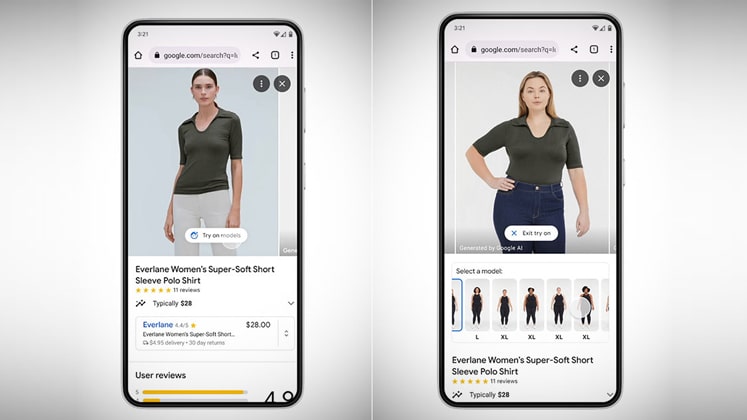

A new shopping tool from Google shows clothing on a lineup of real-life fashion models.

Google’s virtual try-on tool for clothing takes a photograph of an item and tries to predict how it would drape, fold, cling, stretch, and form wrinkles and shadows on a set of real models in various poses. It is one of several Google Shopping updates launching in the coming weeks.

Virtual try-on is powered by a new diffusion-based model that Google developed in-house. Diffusion models, which include the text-to-art generators Stable Diffusion and DALL-E 2, learn to gradually remove noise from a starting image made entirely of noise, moving it step by step closer to a target.
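For readers curious what "removing noise step by step" looks like in practice, here is a minimal Python sketch of a standard DDPM-style reverse process on a tiny 8x8 array. It is not Google's model, whose details are not public in this article. The noise schedule, the function names, and above all `oracle_noise_prediction` are assumptions made for illustration: a real system uses a trained neural network to estimate the noise, whereas this toy cheats by computing it from a target it already knows.

```python
import numpy as np


def make_schedule(num_steps=50, beta_start=1e-4, beta_end=0.2):
    """Linear noise schedule (illustrative values, not from any real product)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)  # cumulative share of signal kept at step t
    return betas, alphas, alpha_bars


def oracle_noise_prediction(x_t, target, alpha_bar_t):
    """Stand-in for a trained denoising network (hypothetical helper).

    Forward noising is x_t = sqrt(a) * x0 + sqrt(1 - a) * eps, so if x0 is
    known the noise eps can be solved for exactly. A real model must learn
    to estimate it from x_t alone.
    """
    return (x_t - np.sqrt(alpha_bar_t) * target) / np.sqrt(1.0 - alpha_bar_t)


def denoise(target, num_steps=50, seed=0):
    """Start from pure noise and walk backwards towards `target`."""
    rng = np.random.default_rng(seed)
    betas, alphas, alpha_bars = make_schedule(num_steps)

    x = rng.standard_normal(target.shape)  # wholly noisy starting image
    errors = []
    for t in range(num_steps - 1, -1, -1):
        eps_hat = oracle_noise_prediction(x, target, alpha_bars[t])
        # DDPM posterior mean: subtract the predicted noise, then rescale.
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_hat) / np.sqrt(alphas[t])
        # Fresh noise is re-injected at every step except the last one.
        noise = rng.standard_normal(target.shape) if t > 0 else 0.0
        x = mean + np.sqrt(betas[t]) * noise
        errors.append(float(np.abs(x - target).mean()))
    return x, errors


# A smooth gradient stands in for the "target" image.
target = np.linspace(-1.0, 1.0, 64).reshape(8, 8)
result, errors = denoise(target)
print(f"mean abs error after first step: {errors[0]:.3f}")
print(f"mean abs error after last step:  {errors[-1]:.3g}")
```

Because the noise estimate here is exact, the final step lands on the target. With a learned estimator the result would be an approximation, and for a try-on system the denoising would additionally be conditioned on the garment photo and the model's pose.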

US customers can now virtually try on women’s clothing from brands like Anthropologie, Everlane, H&M, and LOFT using Google Shopping. On Google Search, people can look for the new ‘Try On’ badge. Men’s tops are due for release by the end of the year.

“When you try on clothes in a store, you can immediately tell if they’re right for you,” said Lilian Rincon, senior director of consumer shopping product at Google.

Alongside the debut of virtual try-on, Google is introducing filtering options for apparel searches, powered by AI and visual matching algorithms. The filters, available under product listings on Shopping, let users narrow their store searches by criteria such as colour, style, and pattern.